In my last post, I was thinking about what we do when we do what we do when we do performance management. And I concluded that the way we manage performance at the moment takes loads of time and does nothing to improve performance. (If you want some reflections on what ‘manage’ means anyway – visit this one).

So what about our organizational strategic management system? Do we have mechanisms for organizing our work in meaningful ways towards a shared direction? (Sorry. That is such a convoluted way to say ‘planning’. But I don’t want to say ‘planning’, as much of our work is unplannable.) Do we have ways of tracking how we are doing so that we can constantly adapt and improve as projects and programmes unfold and the context of our programmes dynamically evolves?

Hmmm… I don’t think so. Here are three reasons why:

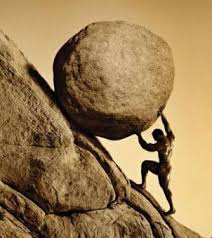

1. Timing. It is almost June and we are reporting on last year and about to ‘plan’ 2013. Now don’t get me wrong. Individuals, projects and teams have been assessing where they have been and where they want to go. The substance is there, but the accompanying document that claims that ‘this was planned in such and such a way and so it came to pass’ is retrofitted. Which takes a load of time and is pointless. And staff know it is pointless which makes them sigh, groan and roll their eyes. Not very motivating, is it? And while they are pushing that Sisyphean rock up the hill, potentially more valuable work is not being done.

2. Lack of precision in language. Let’s assume that this exercise is worthwhile and the timing is acceptable. A further obstacle is that terms are used without precision and one thing is taken for another. Take ‘indicators’ – an indicator should be something you can track over time to see if and how something that interests you is changing. If I am interested in getting rid of graffiti in my neighbourhood, indicators might be the area of wallspace covered in graffiti, or the occurrence of new graffiti, or the number of walls with graffiti. What an indicator can’t be is “30 walls remain graffiti-free” or “70% reduction in graffiti”. Those are targets. Another thing it can’t be is “area of wallspace clear of graffiti due to campaigns, policing and new lighting”. That’s a story with multiple characters! How do you know which part you are measuring? Without good indicators to give us information in our feedback systems, we can neither tell if we are progressing nor take adaptive action if we are not.

3. Trying to control the uncontrollable, plan the unplannable. In an uncertain world, there is a temptation to plan even more, trying to cover all contingencies. We need to plan less but better. The only thing with the same scale and level of complexity as the world is the world itself. Trying to describe it in micro terms is futile and wastes time. So is planning the timing and precise form of outcomes – which are by their nature emergent phenomena, subject to other actors in a context of global dynamic change. It would be more useful to be clear about why we do what we do, what we want to achieve and how we think it might be enabled to happen. Decide what we want to do, aim at it and keep a good eye on how it’s going. With useful indicators (point 2) we can get information to help us make adjustments and take advantage of opportunities as we go along (point 1), rather than laboriously assessing and planning once a year.

The paradoxical thing is that we are a research organization. We produce research to improve the way other people do their work. So you would think that we would be on the front line to read research produced by other people to improve the way we do our work. Right? No, actually. There is loads of literature out there about managing and strategizing in situations of complexity – about half a century’s worth, I think – but we still plan and strategize as though the world could be controlled. I guess our leadership realises this. That is why they are investing in a large group of us to participate in an Outcome Mapping workshop this week. Watch this space…